Trust at Scale: How raNora Adds Guardrails, Governance, and Traceability to AI

Operators are right to be cautious about AI in network operations. Everyone has seen demos that look impressive but break down in real environments because:

- Data is fragmented across vendors and generations,

- Teams don’t trust AI outputs,

- And LLMs can generate confident but incorrect answers.

raNora was designed with these realities in mind. It’s not “AI glued onto telecom.” It’s a governed agentic system built for operational credibility.

The core principle: AI should be repeatable, auditable, and constrained

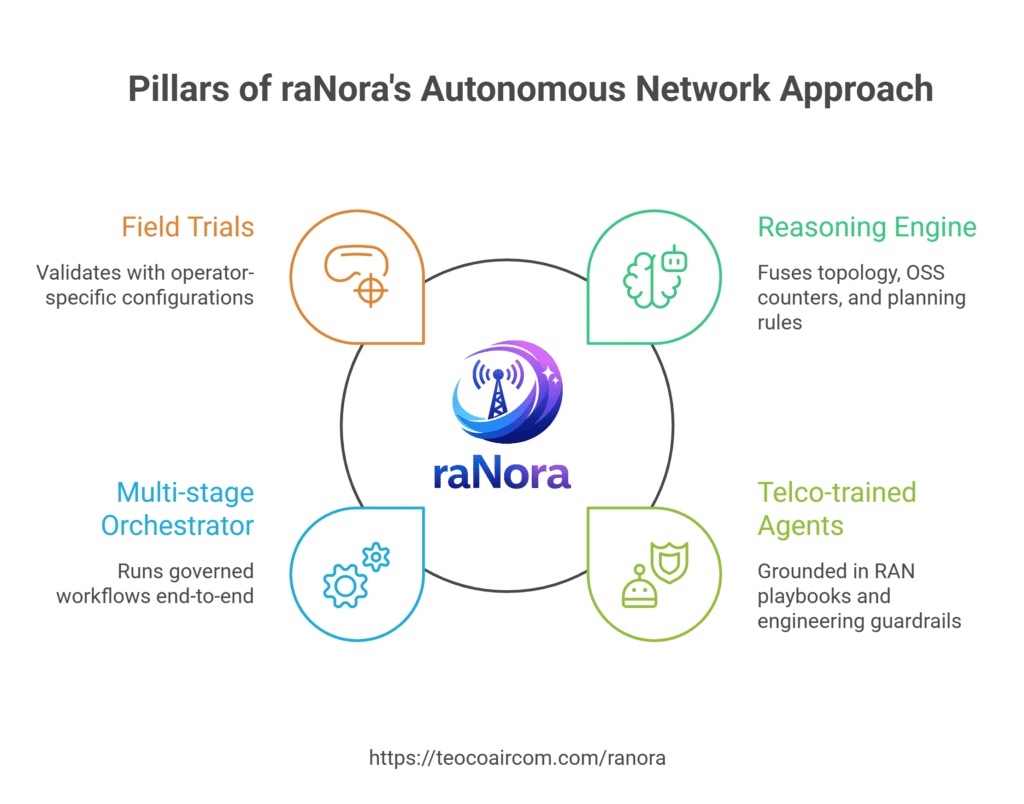

raNora’s Autonomous Network approach combines four pillars:

- A Reasoning Engine that fuses topology, OSS counters, and planning rules

- Telco-trained agents grounded in RAN playbooks and engineering guardrails

- A multi-stage orchestrator to run governed workflows end-to-end

- Field trials to validate with operator-specific configurations

This is what turns a “chat” into “outcomes”: agents execute repeatable runbooks while humans supervise the exceptions.
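To make the idea of guardrails with human supervision concrete, here is a minimal sketch of how a proposed change could be checked before an agent applies it. This is an illustration only, not raNora's actual implementation: the `ProposedChange` type, the `GUARDRAILS` table, the parameter name, and the numeric limits are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedChange:
    """A parameter change suggested by an agent (hypothetical structure)."""
    cell_id: str
    parameter: str
    current: float
    proposed: float


# Hypothetical engineering guardrails: allowed range and maximum step size
# per parameter. Real limits would come from the operator's own rules.
GUARDRAILS = {
    "electrical_tilt_deg": {"min": 0.0, "max": 10.0, "max_step": 2.0},
}


def review(change: ProposedChange) -> str:
    """Return 'auto_apply' when the change is inside the guardrails,
    otherwise 'escalate_to_human'."""
    rule = GUARDRAILS.get(change.parameter)
    if rule is None:
        # No rule defined for this parameter: never act without a human.
        return "escalate_to_human"
    in_range = rule["min"] <= change.proposed <= rule["max"]
    small_step = abs(change.proposed - change.current) <= rule["max_step"]
    return "auto_apply" if in_range and small_step else "escalate_to_human"
```

In this sketch the default is escalation: anything the rules do not explicitly allow goes to a person, which is one simple way to keep humans supervising exceptions.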

Under the hood: a framework built for tool-using agents

The raNora framework includes:

- User policy & resource manager

- Thread manager

- LLM + RAG layer

- Agent tool engine

- Human-in-the-loop

- A portfolio of raNora AI agents

- An orchestrator agent system

- And a reasoning engine with components like query building, analysis, multi-stage translation, and stateful I/O.

It also integrates with operator systems and planning workflows (OSS/CTR/MME, CM/PM/FM, geographic data) and leverages Aircom’s knowledge base and tools.

Why this matters for adoption

Governance + traceability are the difference between:

- “Interesting PoC” and

- “Trusted production workflow”

When an agent can explain its reasoning path, show what data it used, and produce repeatable outputs inside controlled workflows, adoption accelerates—and trust becomes a measurable engineering property, not a leap of faith.
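As a rough illustration of what traceability can look like in practice, the sketch below builds an audit record for a single agent step and fingerprints it, so that a rerun with the same inputs and output can be verified as identical. The `trace_record` function and its field names are assumptions made for this example, not part of raNora.

```python
import hashlib
import json


def trace_record(step: str, inputs: dict, reasoning: list, output: dict) -> dict:
    """Build an audit record for one agent step (hypothetical format).

    The fingerprint is a SHA-256 hash of the step name, inputs, and output,
    serialized with sorted keys so that key order does not change the hash.
    """
    canonical = json.dumps(
        {"step": step, "inputs": inputs, "output": output}, sort_keys=True
    )
    return {
        "step": step,
        "inputs": inputs,          # what data the agent used
        "reasoning": reasoning,    # the reasoning path, as readable steps
        "output": output,          # what the agent produced
        "fingerprint": hashlib.sha256(canonical.encode("utf-8")).hexdigest(),
    }
```

Two runs that yield the same fingerprint used the same data and produced the same result, which gives reviewers a simple, checkable notion of repeatability.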

If your organization has AI pilots stuck in PoC mode, raNora is built to help you cross the gap—from experimentation to operational deployment.

Write to us at raNora@teoco.com or contact your local Aircom representative.
